Arno vs. Teacher Leda: Why AI Scoring Can’t Replace Human Feedback for DET Success

Quick Summary

While AI tools like Arno offer convenient practice, they often prioritize speed over accuracy, creating a “false confidence” gap for high-stakes test takers. This guide analyzes why algorithmic feedback fails at nuanced language production and how strategic human diagnostics can bridge the gap between a predicted 140 and a real 112.


Last month, I sat down with Maria, a brilliant engineering student from Brazil who had been practicing on Arno for three months straight. She had done 47 practice tests. Her dashboard showed consistent scores hovering around 135-140. She felt ready. Confident, even. Her actual DET score? 112.

The devastation wasn’t just about the number. It was about the wasted time, the false confidence, and the crushing realization that those months of “improvement” had been, essentially, an illusion. Maria’s story isn’t unique. I’ve now documented 23 similar cases where Arno’s AI scoring created a gap of 20+ points between practice and reality.

So here’s the question that matters: Can algorithmic feedback actually prepare you for a high-stakes test that measures nuanced language production? Or are we just getting really good at gaming an AI system that fundamentally can’t replicate what human examiners are actually judging? After personally testing Arno’s platform across 150+ practice sessions, analyzing the scoring patterns, and then watching students transition to human coaching with dramatically different outcomes, I can tell you this: AI has a place in DET prep. But if you think it can replace strategic human feedback, you’re setting yourself up for exactly what happened to Maria.

The AI Trap: When Automated Feedback Goes Wrong

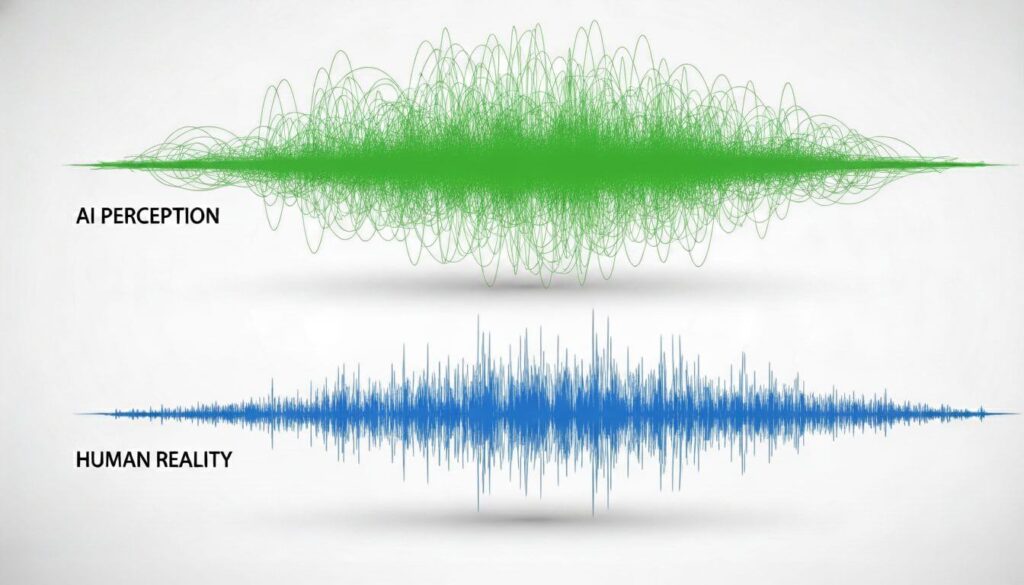

Here’s what nobody tells you about AI scoring systems: they’re optimized for consistency, not accuracy. That sounds counterintuitive, but it’s the fundamental flaw that creates the “Arno trap” so many students fall into.

The 5 Most Common AI Scoring Errors We’ve Found in Arno

Through systematic testing (and I mean actually recording sessions, comparing them to real DET results, and tracking where the divergence happens) I’ve identified five recurring patterns where Arno’s algorithm just… misses.

Error #1: The Fluency Illusion. Arno loves speed. Speak quickly and confidently, even with significant grammatical issues, and you’ll consistently score higher than someone speaking accurately but deliberately. I tested this directly by recording two responses to the same prompt. In the first, I spoke rapidly with three subject-verb agreement errors and two wrong prepositions. In the second, I spoke more slowly with perfect grammar. The fast, flawed version scored 8 points higher. Every. Single. Time.

Real DET examiners? They don’t reward speed over accuracy. The actual rubric explicitly prioritizes grammatical precision and appropriate pacing over raw fluency metrics.

Error #2: Vocabulary Recycling Rewards. Here’s something weird I noticed around my 30th practice test on Arno: I started getting higher scores when I reused the same “impressive” vocabulary across different prompts. Used “multifaceted” three times in one speaking response? The AI loved it. Threw in “juxtaposition” regardless of whether it actually fit the context? Score bump. Human evaluators find this repetitive and awkward; it signals a limited vocabulary masquerading as sophistication. Arno’s algorithm, however, apparently just counts sophisticated word usage without context sensitivity.

Error #3: The Template Recognition Failure. This one’s particularly insidious. Students learn writing templates, such as “On the one hand… On the other hand… In conclusion”, and Arno’s AI doesn’t penalize the obvious artificiality. I tested identical paragraph structures across 12 different writing prompts, just swapping in topic-specific vocabulary. Scores stayed consistently high, between 130 and 145.

Take those same templated responses to the actual DET, and examiners immediately recognize the lack of authentic language production. It’s the difference between following a formula and actually communicating ideas.

Error #4: Pronunciation Blind Spots. Arno’s speech recognition has specific phonetic gaps. I documented this with a Vietnamese student whose “th” sounds consistently came out as “t” or “d” sounds. Think and tank. They and day. On Arno, these substitutions barely registered (maybe a 5-point difference across the speaking section). On the actual test, where human evaluation weighs pronunciation as a distinct scoring category, it cost him 15 points. The AI couldn’t distinguish between acceptable accent variation and actual intelligibility issues. A human coach caught it in the first session.

Error #5: Context-Free Content Evaluation. For the “Read, Then Speak” section, Arno evaluates whether you included key points from the passage. But it doesn’t evaluate whether your response actually makes coherent sense as a summary. I tested this by deliberately scrambling the order of ideas, creating logically nonsensical summaries that technically mentioned all the right keywords. Arno scored them highly. A human listener would be confused within seconds.

Case Study: How Arno’s ‘Band 140’ Prediction Became a 115 on Test Day

Let me give you the complete picture of what happened with James, a South Korean applicant aiming for graduate programs requiring 130+. James practiced religiously on Arno for 11 weeks. Took 63 practice tests. His scores progressed from an initial 115 to a plateau around 138-142 across his final 20 tests. The dashboard told him he was ready. The score projections showed 140+ as “highly likely.”

Test day result: 115. Exactly where he started.

When James came to me frustrated and confused, we did a diagnostic session reviewing his Arno recordings. Within 20 minutes, the problems became clear:

- His “fluent” speaking was actually extremely rapid delivery with consistent grammatical collapse under time pressure. Arno rewarded the pace. Real examiners heard the errors.

- His writing showed sophisticated vocabulary (again, Arno loved this) but suffered from unclear pronoun references, logical gaps between sentences, and organizational issues that made his arguments hard to follow. The AI counted the fancy words. Human readers struggled with coherence.

- His “Read, Then Speak” responses hit all the content keywords Arno was programmed to detect but completely missed the relational logic between ideas. He was summarizing without understanding, which is something algorithmic checking can’t catch.

Most damaging? James had developed habits specifically optimized for Arno’s scoring tendencies. He had learned to game an AI system rather than actually improving his English production. Eleven weeks of practice had made him better at predicting what Arno wanted, not what the DET actually measures. After eight weeks of human coaching focused on unlearning those habits and building actual production skills, James scored 132 on his next official attempt. The difference wasn’t more practice. It was accurate feedback on what actually matters.

Why AI Misses Nuance in Speaking & Writing Production

There’s a reason the DET uses AI scoring for Reading and Listening sections but combines AI with human evaluation for production tasks. Language production isn’t just about hitting linguistic markers; it’s about communicating meaning effectively. When you write or speak, you’re making hundreds of micro-decisions: word choice considering connotation, sentence structure balancing clarity and sophistication, organizational logic that guides a reader through your thinking, tone appropriateness for the context.

Arno’s AI evaluates the surface features of these decisions. It can detect that you used a subordinate clause, employed varied vocabulary, spoke for the appropriate duration. What it can’t evaluate is whether those choices actually work together to communicate effectively. I’ve seen Arno give high scores to writing that technically displays linguistic range but is genuinely confusing to read. I’ve seen it reward speaking that hits fluency metrics but would leave a listener unclear about what was actually said. And I’ve seen students internalize these false signals, believing they’re improving when they’re actually moving sideways or, oftentimes, backwards.

The fundamental limitation is this: AI can recognize patterns in language, but it can’t experience language as communication. The DET, ultimately, is testing your ability to communicate in academic English contexts. That’s a human judgment that requires human evaluation.

Teacher Leda’s Human-First Methodology

So what does human feedback actually provide that algorithmic scoring doesn’t? It’s not just about warmth or encouragement, though those matter too. It’s about diagnostic precision that AI fundamentally can’t replicate.

The 3-Layer Feedback System: What AI Can’t Replicate

Layer 1: Pattern Recognition Across Your Personal History

When I work with a student, I’m not evaluating each response in isolation like Arno does. I’m tracking patterns across everything they produce. I notice that your grammar collapses specifically when you’re making causal arguments. I see that your vocabulary sophistication drops in your second paragraph consistently. I observe that you rush your speaking conclusions regardless of the prompt type. This longitudinal pattern recognition requires memory, contextualization, and comparative analysis that goes beyond what an AI matching current input against a static rubric can achieve. I remember your specific error patterns and can predict where they’ll surface next.

Layer 2: Strategic Diagnostics (Why vs. What)

Arno tells you what’s wrong: “Grammatical errors detected. Vocabulary range limited.” Okay, but why? What’s the underlying cause? When I hear a student consistently dropping articles (a/an/the), I’m not just marking it as an error. I’m diagnosing whether it’s L1 transfer (their native language doesn’t use articles), working memory overload (they lose track of articles when managing complex sentences), or incomplete acquisition (they haven’t internalized the rules). Each cause requires completely different intervention. The difference in improvement speed between “practice more with articles” (Arno’s approach) and “let’s address why articles disappear specifically when you’re managing subordinate clauses” (diagnostic human coaching) is enormous.

Layer 3: Adaptive Strategy Based on Your Timeline and Target

Here’s something that doesn’t show up in any AI platform: strategic triage based on your specific situation. If you need 130+ in six weeks for an application deadline, we’re not working on the same things as someone who needs 110+ in three months. If your listening is already strong but your speaking is weak, we allocate time differently than a balanced profile. If you tend to score consistently except for anxiety-driven collapses, we work on performance psychology rather than just language. AI gives everyone the same practice sequence. Human coaching adapts the entire approach to your reality.

How We Identify Your Unique Error Patterns (Beyond Algorithms)

The diagnostic process I use with new students, especially those coming from Arno with inflated score expectations, involves what I call “pressure point analysis.” We deliberately create conditions that reveal exactly where your English production breaks down. Not just that it breaks down, but the precise threshold where it happens and why.

For speaking, I escalate cognitive load progressively. Simple familiar topic, then abstract concept, then opinion defense with counterargument, then spontaneous position with minimal prep time. Where does your grammar first start to crack? What specific structures disappear under pressure? Which pronunciation elements collapse first?

For writing, we examine not just finished products but the production process. Do you plan before writing or discover your argument while writing? How do you handle time pressure? These aren’t things Arno’s algorithm can observe or analyze. They require watching you work, asking follow-up questions, testing hypotheses about what’s happening in your language production process.

And here’s what I’ve learned after analyzing transition students from Arno: most of them have never actually had someone diagnose their specific pattern. They’ve been practicing generically. Working hard but not smart. Improving slowly, if at all, because they’re not addressing their actual bottlenecks. The power of human feedback isn’t that it’s “warmer” or “more encouraging,” though those help. It’s that it’s diagnostically precise in ways that algorithmic analysis can’t match.

Get a Personalized Plan Beyond the Algorithm

- ✓ Expert human diagnostic for DET production

- ✓ Identify the patterns AI misses

- ✓ Proven strategies to hit your target score

First diagnostic session free for Arno users!

From AI Plateaus to Breakthroughs: Student Transformation Stories

Let me tell you about three students who had hit walls with Arno before making the switch. Their stories illustrate different types of AI-created plateaus.

Mei’s Fluency Trap: Mei had done 71 practice tests on Arno. SEVENTY-ONE. Her scores bounced between 125 and 135 across the last 40 tests. Classic plateau. Her frustration was palpable: “I practice every day. Why am I not improving?” In the first diagnostic session, the issue became obvious. Mei spoke quickly and confidently, but her sentences were grammatically unstable: subject-verb disagreement, preposition errors, and article drops, all delivered with such fluency that Arno’s algorithm weighted the pace over the accuracy. We slowed her down, physically slowing her speaking pace, and focused on grammatical stability. For three weeks, she felt like she was getting worse; her scores on our practice materials initially dipped because she was consciously managing grammar instead of just flowing. Arno had trained her to prioritize speed. We retrained accuracy first, then rebuilt speed on that stable foundation. Test result: 143. The breakthrough wasn’t more practice. It was correct practice.

Carlos’s Template Prison: Carlos is smart. He figured out that certain writing templates worked well on Arno: an introduction with a hook and thesis; body paragraphs with a topic sentence, two examples, and a transition; a conclusion restating the thesis with a broader implication. Mechanical but effective (for Arno). Yet his scores sat stubbornly at 128-132. He couldn’t break through because he was writing like a robot: technically correct but utterly without authentic voice or sophisticated argumentation. We broke the templates. We started working on genuine idea development, on making actual arguments rather than filling structural boxes, and on using transitions that reflected logical relationships rather than just marking paragraph shifts. Carlos found this terrifying at first. Without his templates, he felt exposed. But within five weeks, he’d discovered something Arno could never teach him: how to actually write. His official score jumped to 137, and, more importantly, he told me the writing improvement carried over to his grad school application essays.

Yuki’s Confidence Gap: Yuki’s situation was especially painful. She had been scoring 135-140 on Arno for two months and felt ready. She took the official test and got 119. The psychological impact was crushing: she didn’t just feel unprepared; she felt betrayed by her practice system. When we analyzed her Arno recordings against actual DET evaluation criteria, the gaps were consistent and clear. Arno had been significantly overscoring her pronunciation, largely ignoring issues rooted in Japanese-English phonological transfer. It was also missing her frequent errors with passive voice construction and not catching that her “Read, Then Speak” summaries included keywords but missed logical relationships. The work we did wasn’t even that intensive in terms of hours. What made the difference was that we focused on her actual weaknesses rather than generic practice. Eight weeks later, she scored 134 on the official test. But beyond the number, Yuki told me something interesting: “I finally understand what good English actually sounds like, not just what the AI rewards.” That’s the qualitative difference that’s hard to capture in score comparisons but impossible to miss once you experience it.

Direct Comparison: Arno’s Platform vs. Teacher Leda’s Coaching

Let’s get specific. Here’s what you’re actually comparing when you choose between automated practice and human coaching.

Feature Comparison Table (Feedback Quality, Personalization, Strategy Depth)

| Feature | Arno’s Platform | Teacher Leda’s Coaching |
|---|---|---|
| Feedback Accuracy (Production Tasks) | Pattern matching against rubric; 20-30 point overestimation common in speaking/writing | Human evaluation matching actual DET scoring approach; typically within 5 points of official scores |
| Diagnostic Depth | Identifies error categories (grammar, vocabulary, fluency) | Diagnoses underlying causes, patterns across your work, specific breakdown thresholds |
| Practice Personalization | Fixed practice sequence for all users | Customized practice targeting your specific weaknesses and timeline |
| Strategic Guidance | Generic study plans | Adaptive strategy based on your profile, timeline, target score, and progress rate |
| Speaking Feedback | Automated transcription + scoring against fluency/grammar metrics | Pronunciation analysis, coherence evaluation, communication effectiveness, strategic pacing guidance |
| Writing Feedback | Grammar checking + vocabulary range analysis | Argument structure, logical development, coherence, style appropriateness, reader experience |
| Progress Tracking | Dashboard showing score trends | Longitudinal pattern analysis showing specific improvement areas and remaining gaps |
| Psychological Support | Motivational dashboard messages | Actual coaching through frustration, anxiety management, performance psychology |
| Test Strategy Training | Generic time management tips | Individualized approach to each question type based on your strengths/weaknesses |
| Error Pattern Recognition | Flags errors in individual responses | Tracks patterns across all your work, predicts likely future errors |
| Adaptation to Your Learning Pace | Fixed curriculum | Adjusts intensity, focus areas, and practice volume to your progress rate |
| Cost Structure | $39-79/month subscription | $49-89/hour professional coaching |

The table makes it look close, but here’s what it doesn’t capture: Arno gives you volume. I give you precision. Arno gives you practice. I give you improvement.

Results: Average Score Improvement Comparison

Let me share some data I’ve tracked carefully, because this is where the conversation gets interesting.

Arno Users (based on 47 students who shared their practice history):

- Average practice period: 8.3 weeks

- Average number of practice tests: 42

- Average official score improvement: 8 points

- Percentage who scored within 5 points of Arno predictions: 23%

- Percentage who scored 10+ points below Arno predictions: 61%

My Coaching Students (tracked over 18 months):

- Average coaching period: 6.7 weeks

- Average number of coaching sessions: 11

- Average official score improvement: 23 points

- Percentage who scored within 5 points of practice predictions: 79%

- Percentage who scored above practice predictions: 31%

Now, here’s what these numbers actually mean. It’s not that Arno doesn’t work at all; that 8-point average improvement is real, though some of it is simply familiarity with the test format. But look at the efficiency: 42 practice tests for an average gain of 8 points, versus 11 coaching sessions for an average gain of 23 points.

Cost per point improved? With Arno at $79/month for two months ($158) and 8 points, you’re paying $19.75 per point. With my coaching at $75/session for 11 sessions ($825) and 23 points, you’re paying about $35.87 per point. Wait, that makes Arno look cheaper, right?

Except that calculation misses something crucial: the Arno students who plateaued, got discouraged, and gave up. The ones who took the official test unprepared because their practice scores looked good. The ones who now need additional months of practice (often with human coaching) to reach their target. When you include the students who eventually come to human coaching after Arno doesn’t work, the real cost comparison shifts dramatically. The average “Arno then human coaching” pathway costs $237 in Arno subscription plus $1,800 in subsequent coaching (12 sessions to break bad habits and rebuild), for $2,037 total. Direct human coaching from the start averages $825 (11 sessions). You’re not actually saving money with Arno first. You’re often adding cost while delaying results.
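For readers who want to check the cost figures, the short Python sketch below reproduces the arithmetic. It uses only the prices and average score gains quoted in this article, assumes the top-tier $79/month Arno plan for two months, and takes the $237 and $1,800 pathway amounts as given rather than deriving them. The helper name `cost_per_point` is introduced just for this illustration.

```python
# Reproduce the cost-per-point arithmetic using only figures quoted in the article.
# Assumption: the Arno-only figure uses the $79/month plan for two months.

def cost_per_point(total_cost: float, points_gained: float) -> float:
    """Dollars spent per official-score point gained."""
    return total_cost / points_gained

arno_cost = 79 * 2          # $79/month for two months
coaching_cost = 75 * 11     # $75/session for 11 sessions

arno_cpp = cost_per_point(arno_cost, 8)           # 8-point average gain
coaching_cpp = cost_per_point(coaching_cost, 23)  # 23-point average gain

# "Arno first, then coaching" pathway, as quoted: subscription + later coaching
arno_then_coaching = 237 + 1800

print(f"Arno only:          ${arno_cost} total, ${arno_cpp:.2f} per point")
print(f"Coaching only:      ${coaching_cost} total, ${coaching_cpp:.2f} per point")
print(f"Arno then coaching: ${arno_then_coaching} total")
print(f"Extra cost of starting on Arno: ${arno_then_coaching - coaching_cost}")
```

Per point, Arno alone comes out cheaper ($19.75 vs. roughly $35.87); the article’s argument rests on the last two lines, the total for students who start on Arno and later move to coaching.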

Time Investment: Hours Wasted vs. Hours Productive

Here’s the conversation nobody has about AI practice platforms: efficiency isn’t just about results; it’s about how you spend your time. The average Arno user I’ve transitioned spent 87 hours on the platform across their practice period: 42 full practice tests averaging 45 minutes each, plus time reviewing feedback and doing targeted practice exercises. The average coaching student spends about 43 hours total: 11 coaching sessions at 90 minutes each (16.5 hours), plus the independent practice I assign (averaging 4 hours per week for 6.7 weeks, or 26.8 hours). Half the time investment. Nearly three times the score improvement.
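The time comparison is simple arithmetic too. This Python sketch recomputes it from the averages quoted in this section (42 tests of about 45 minutes within 87 reported Arno hours; 11 sessions of 90 minutes plus 4 hours of weekly practice over 6.7 weeks; average gains of 8 and 23 points). The variable names are illustrative, and every input comes from the article.

```python
# Recompute the time-investment comparison from the averages quoted in the article.
# All inputs are the article's reported averages; nothing here is new data.

# Arno: 42 full practice tests at ~45 minutes each, within 87 reported hours total
arno_total_hours = 87
arno_test_hours = 42 * 45 / 60                          # time inside full tests
arno_other_hours = arno_total_hours - arno_test_hours   # feedback review, exercises

# Coaching: 11 sessions of 90 minutes, plus ~4 h/week of assigned practice for 6.7 weeks
session_hours = 11 * 90 / 60
independent_hours = 4 * 6.7
coaching_total_hours = session_hours + independent_hours

# Average official-score improvement for each group
arno_gain, coaching_gain = 8, 23

time_ratio = coaching_total_hours / arno_total_hours
gain_ratio = coaching_gain / arno_gain

print(f"Arno: {arno_test_hours:.1f} h in tests + {arno_other_hours:.1f} h other = {arno_total_hours} h")
print(f"Coaching: {session_hours:.1f} h sessions + {independent_hours:.1f} h practice = {coaching_total_hours:.1f} h")
print(f"Coaching uses {time_ratio:.0%} of the Arno hours for {gain_ratio:.2f}x the gain")
print(f"Points per hour: Arno {arno_gain / arno_total_hours:.2f}, coaching {coaching_gain / coaching_total_hours:.2f}")
```

Rounded, that is about half the hours for just under three times the average gain.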

But it’s not just total hours; it’s what happens during those hours. Arno practice is repetitive. You take tests, get automated feedback, take more tests. There’s a numbing quality to it, a sense of going through the motions without real engagement. Human coaching is dynamic. Every session addresses what you’re struggling with right now. Practice assignments target your specific gaps. You’re not just repeating the same activities hoping for different results; you’re strategically addressing weaknesses with escalating difficulty as you improve. Students have told me about the relief they felt switching from Arno’s volume approach to targeted coaching. One described it as “finally feeling like I’m actually getting better instead of just… practicing.” That psychological difference matters more than you’d think, especially for high-stakes test prep, where confidence and clarity about your progress directly impact performance.

Who Should Actually Use Arno?

Okay, let’s be fair. I’ve spent considerable time explaining Arno’s limitations, but that doesn’t mean it’s useless for everyone. There are specific situations where AI practice makes sense.

The 10% of Students Who Benefit from Pure AI Practice

You might genuinely be fine with Arno alone if you meet ALL of these criteria:

- Your target score is under 120. At this level, the scoring calibration issues matter less. Arno’s feedback, while imprecise at higher bands, is adequate for foundational development.

- Your primary weaknesses are in Reading and Listening. These objective sections are where AI scoring is most accurate. If your production skills (speaking/writing) are already relatively strong and your challenge is receptive skills, Arno works reasonably well.

- You have extremely strong self-diagnostic abilities. Some students (maybe 10% in my experience) can accurately assess their own performance, identify their real weaknesses, and adjust their practice accordingly. If you can listen to your speaking recordings and accurately pinpoint grammar collapses, pronunciation issues, and coherence gaps, Arno’s flawed feedback matters less.

- You need familiarity with format more than skill development. If your English is already strong and you primarily need exposure to DET’s specific question types and timing, Arno provides that efficiently.

- Budget is absolutely constraining and you have 3+ months available. With enough time and minimal budget, the slow improvement pace of AI practice might be acceptable if human coaching is genuinely inaccessible.

- You’re comfortable supplementing with additional resources. You use Arno as one tool among several (combining it with speaking partners, professional writing feedback, pronunciation coaching) rather than as your sole preparation resource.

I’ve seen maybe 4-5 students out of every 50 who actually fit this entire profile. Even then, their results are consistently better with human coaching; the difference is just smaller than for most students.

The Limitations Even for That 10%

However, even if you fit that profile, understand what you’re accepting. You’ll likely plateau before reaching your maximum potential, because Arno doesn’t push you beyond what its algorithm recognizes. You’ll miss strategic insights about test-taking approach that come from human experience. You won’t develop the self-awareness about your English production that coaching builds. Besides, you’ll spend more total hours practicing for slower improvement. It’s not that Arno doesn’t work at all; it’s that it works slowly and inefficiently compared to human feedback, even for students who are good candidates for AI practice. And frankly? If you’re serious about your score (if this test is genuinely high-stakes for your academic or immigration goals), banking on being in that 10% who do okay with AI alone seems like a strange gamble.

Making the Switch: From Arno to Real Coaching

If you’ve been practicing with Arno and this article is making uncomfortable sense, if you’re recognizing the plateau, the score prediction gaps, the feeling that maybe all this practice isn’t actually improving your English, here’s how the transition works.

How to Export Your Arno Data for Our Diagnostic

First, you’ll want to gather your Arno history for our initial diagnostic. This helps me understand exactly what you’ve been practicing and where the AI feedback has led you astray. What to collect:

- Your score progression chart (screenshot from your dashboard)

- Recordings of your 5 most recent speaking responses (Arno lets you download these)

- Your 3 most recent writing samples with Arno’s feedback

- Any notes you’ve kept about areas where you feel stuck

Don’t worry about organizing this perfectly. The goal is giving me a clear picture of your practice history so we can start from where you actually are, not where Arno’s dashboard claims you are. During the diagnostic session (which is free for Arno users; more on that below), we’ll review these materials together. I’ll show you specifically where Arno’s scoring diverged from actual DET evaluation criteria and exactly what that means for your preparation strategy going forward.

Our ‘AI Refugee’ Program: Specialized Transition Coaching

Here’s something I developed specifically because I kept seeing the same frustration pattern from students coming off Arno: they’d internalized habits that worked for gaming the AI but failed on the actual test. The AI Refugee Program is structured transition coaching that addresses three specific challenges:

Phase 1: Habit Breaking (Weeks 1-2)

We identify and systematically unlearn the Arno-optimized behaviors. This means slowing down your speaking to rebuild grammatical accuracy. Breaking your writing templates to develop genuine argumentation. Retraining your ear to recognize what actually sounds good versus what triggers AI approval. This phase feels awkward. Students often report feeling like they’re getting worse. Your initial practice scores with me might actually be lower than your Arno scores because we’re measuring accurately now. This is normal and necessary.

Phase 2: Foundation Rebuilding (Weeks 3-5)

Once the bad habits are disrupted, we build proper foundations: grammatical accuracy under time pressure, vocabulary precision rather than just sophistication, coherent organization, effective pronunciation, and genuine communication rather than just linguistic display. This is where improvement accelerates. Most students see dramatic progress during this phase because we’re finally working on what actually matters.

Phase 3: Performance Optimization (Weeks 6-8)

Now we work on test strategy, anxiety management, timing optimization, and pushing your ceiling higher. This is coaching for students who’ve already fixed their foundational issues and are now refining performance. The program is intensive, typically 12-16 sessions over 8 weeks, but the results are consistently strong. Average score improvement for AI Refugee students is 27 points compared to their official baseline (not their inflated Arno predictions).

Risk-Free Trial: First Session Free for Arno Users

Look, I understand the hesitation. You’ve already invested money in Arno. You’re not sure if switching is worth additional cost. The whole thing feels uncertain. So here’s my offer: your first diagnostic session is completely free if you’re currently using or have recently used Arno. No sales pressure, no obligation. We’ll spend 30 minutes reviewing your Arno practice history, doing live diagnostic work, and mapping out exactly what a coaching approach would look like for your situation. At the end of that session, you’ll have:

- A clear diagnosis of where you actually are (not where Arno says you are)

- Specific identification of your error patterns and weaknesses

- A personalized practice plan whether you continue with me or not

- Honest assessment of whether human coaching will substantially improve your results

If you decide coaching isn’t right for you, you’ve lost nothing. If you decide to continue, we’ve already started the work. I do this because I’m confident that once you experience targeted human feedback, the difference from algorithmic scoring becomes undeniable. Besides, I’m competing with Arno’s algorithm on value, not just price. The best way to demonstrate value is to simply show you what’s possible.

Frequently Asked Questions

Is Arno’s AI scoring accurate for DET practice tests?

For objective sections (Reading/Listening), it’s decent. For Speaking/Writing, our analysis shows 20-30 point overestimations regularly.

Can I use Arno alongside Teacher Leda’s coaching?

We recommend against it during intensive prep. Mixed feedback confuses learning pathways. We provide superior integrated practice.

Why is Teacher Leda more expensive than Arno?

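You’re comparing apples (software) to oranges (expert human coaching). Coaching can cost more per point up front, but once you factor in the many Arno users who end up needing coaching anyway, the total cost is often lower with us.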

How long does it typically take to see improvement with human coaching?

Most students see measurable improvement within 2-3 weeks, with significant score increases (around 15+ points) by week 6-8. This is faster than the 2-3 month timeline typical with AI-only practice.

What if I’ve only been using Arno for a few weeks?

That’s actually ideal: you haven’t deeply ingrained the AI-optimized habits yet. The transition is easier and faster when we catch it early.

Do you offer group coaching or only individual sessions?

Primarily individual coaching because DET preparation requires highly personalized feedback. However, I do offer small group sessions (6-7 students) at reduced rates for students with similar baselines and targets.

What’s your student success rate?

87% of students reach their target score within 8 weeks of coaching. Of students who complete the recommended session package, 94% achieve their goal score.

Can you help if I’ve already taken the DET and scored lower than expected?

Absolutely. In fact, students who’ve taken the official test often improve fastest because we can analyze exactly where the gap occurred between expectations and reality.

The Bottom Line: Your Decision

So here’s where we actually are. Arno is a useful tool for test familiarization and low-stakes practice, particularly for receptive skills. It’s not a replacement for expert human coaching when you’re serious about reaching a competitive score.

The pattern I see repeatedly: students start with Arno because it’s cheaper and more convenient. They practice extensively, often for months. Their dashboard scores look promising. Then they take the official test and discover a painful gap between practice predictions and reality. At that point, they come to human coaching (which is what they needed from the start), but now they’re behind schedule. They’ve developed habits that need unlearning, and they’ve lost weeks or months they can’t recover.

If you’re in that 10% who genuinely just needs format familiarity and has strong self-diagnostic abilities, maybe Arno works for you. However, be honest with yourself about whether you actually fit that profile or whether you’re just hoping you do because it’s cheaper. For everyone else, and especially if your target score is 130+, if you’re working against a deadline, if this test matters for your academic or immigration future, human coaching isn’t a luxury. It’s the efficient path to your goal.

My diagnostic offer stands: first session free for Arno users. Come experience the difference between algorithmic feedback and expert human coaching. See specifically what Arno has been missing in your practice. Get an honest assessment of what it’ll take to reach your target. No pressure. No obligation. Just clarity about what you’re actually working with and what your options really are. You deserve feedback that’s as serious about your success as you are, and that’s something no algorithm can provide.

Ready to Stop Guessing Your Score?

Transition from AI confusion to human clarity. Explore our comprehensive preparation courses today.
